Nomic Embed: Training a Reproducible Long Context Text Embedder

Date: 2024-02-02

Abstract

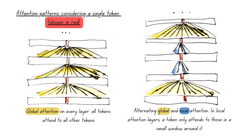

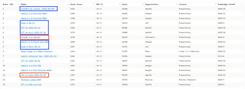

This technical report describes the training of nomic-embed-text-v1, the first fully reproducible, open-source, open-weights, open-data, 8192 context length English text embedding model that outperforms both OpenAI Ada-002 and OpenAI text-embedding-3-small on short and long-context tasks. We release the training code and model weights under an Apache 2 license. In contrast with other open-source models, we release a training data loader with 235 million curated text pairs that allows for the full replication of nomic-embed-text-v1.

Also read Nomic's blog post.

The research paper below links to the GitHub repo.
