Fine-tune ModernBERT for text classification using synthetic data

Date: 2024-12-30

Description

This summary was drafted with Gemini Experimental 1206 (Google)

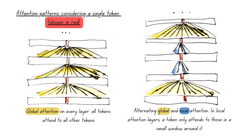

In this tutorial, David Berenstein demonstrates the effectiveness of synthetic datasets generated by Large Language Models (LLMs). He uses a Hugging Face Space tool to create a synthetic dataset for text domain classification, then successfully fine-tunes a ModernBERT model on it using consumer hardware.

Read the blog post here.