GQA: Training Generalized Multi-Query Transformer Models from Multi-Head Checkpoints

Date: 2023-05-22

Abstract

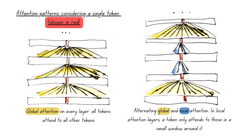

Multi-query attention (MQA), which only uses a single key-value head, drastically speeds up decoder inference. However, MQA can lead to quality degradation, and moreover it may not be desirable to train a separate model just for faster inference. We (1) propose a recipe for uptraining existing multi-head language model checkpoints into models with MQA using 5% of original pre-training compute, and (2) introduce grouped-query attention (GQA), a generalization of multi-query attention which uses an intermediate (more than one, less than number of query heads) number of key-value heads. We show that uptrained GQA achieves quality close to multi-head attention with comparable speed to MQA.
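To make the "intermediate number of key-value heads" idea concrete, here is a minimal pure-Python sketch of causal grouped-query attention. It is an illustration under stated assumptions, not the authors' implementation: the function names, the nested-list tensor layout, and the choice to map consecutive query heads to the same key-value head are all hypothetical. The second helper sketches the checkpoint conversion step; the abstract does not describe it, but the body of the paper reports mean-pooling the key and value projection matrices within each group before uptraining.

```python
import math


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def grouped_query_attention(q, k, v):
    """Causal attention in which groups of query heads share one key-value head.

    q:    [num_q_heads][seq_len][head_dim]
    k, v: [num_kv_heads][seq_len][head_dim]
    (Layout and names are assumptions made for this sketch.)
    """
    num_q_heads, num_kv_heads = len(q), len(k)
    if num_q_heads % num_kv_heads != 0:
        raise ValueError("num_q_heads must be divisible by num_kv_heads")
    group_size = num_q_heads // num_kv_heads
    head_dim = len(q[0][0])
    scale = 1.0 / math.sqrt(head_dim)

    out = []
    for h, q_head in enumerate(q):
        kv = h // group_size  # consecutive query heads share a key-value head
        head_out = []
        for t, q_vec in enumerate(q_head):
            # Causal masking: position t only attends to positions 0..t.
            scores = [
                scale * sum(a * b for a, b in zip(q_vec, k[kv][s]))
                for s in range(t + 1)
            ]
            weights = softmax(scores)
            head_out.append([
                sum(w * v[kv][s][d] for s, w in enumerate(weights))
                for d in range(head_dim)
            ])
        out.append(head_out)
    return out


def mean_pool_heads(heads, num_kv_heads):
    """Average each group of per-head projection matrices into one matrix.

    heads: [num_heads][rows][cols] -> [num_kv_heads][rows][cols]
    """
    if len(heads) % num_kv_heads != 0:
        raise ValueError("number of heads must be divisible by num_kv_heads")
    group_size = len(heads) // num_kv_heads
    pooled = []
    for g in range(num_kv_heads):
        group = heads[g * group_size:(g + 1) * group_size]
        pooled.append([
            [sum(vals) / group_size for vals in zip(*rows)]
            for rows in zip(*group)
        ])
    return pooled
```

In this sketch, passing a single key-value head reproduces MQA, and passing as many key-value heads as query heads reproduces standard multi-head attention; GQA is every setting in between. Fewer key-value heads mean a smaller key-value cache to load at each decoding step, which is where the inference speedup comes from.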


Evaluation of Sports Performance: Cognitive Biases, Vectors an...

What Tech tells us about corporate culture

Recently on :

Artificial Intelligence

Research

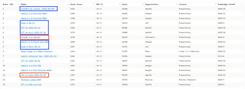

WEB - 2024-12-30

Fine-tune ModernBERT for text classification using synthetic data

David Berenstein explains how to finetune a ModernBERT model for text classification on a synthetic dataset generated from argi...

WEB - 2024-12-25

Fine-tune classifier with ModernBERT in 2025

In this blog post Philipp Schmid explains how to fine-tune ModernBERT, a refreshed version of BERT models, with 8192 token cont...

WEB - 2024-12-18

MordernBERT, finally a replacement for BERT

6 years after the release of BERT, answer.ai introduce ModernBERT, bringing modern model optimizations to encoder-only models a...

PITTI - 2024-09-19

A bubble in AI?

Bubble or true technological revolution? While the path forward isn't without obstacles, the value being created by AI extends ...

PITTI - 2024-09-08

Artificial Intelligence : what everyone can agree on

Artificial Intelligence is a divisive subject that sparks numerous debates about both its potential and its limitations. Howeve...